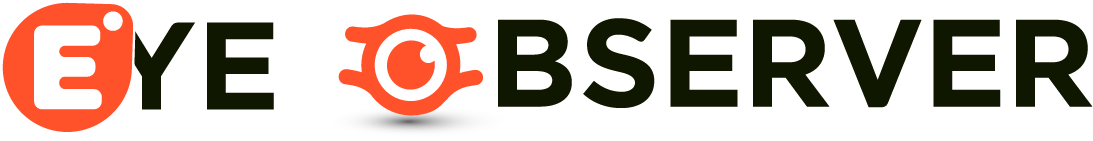

Spotify Wrapped is back. After last year’s widely criticized flop that included an AI podcast as its highlight, the streamer’s highly anticipated annual review feature has returned to its roots. This year, Spotify is doubling down on what it knows works best: deep dives into your streaming data, creative experiences, messages from favorite artists, and other social features.

The company claims that Wrapped 2025 is its biggest yet, as it’s introducing nearly a dozen new features in addition to its old standbys, like top songs and artists. Plus, it’s offering more visibility into users’ data than in years past. For the first time, Spotify Wrapped is adding a live multiplayer feature to compare your listening data with friends.

Wrapped Party, Wrapped’s first live interactive experience, allows you to invite up to nine friends to compare listening stats.

Also new this year, your Top Songs Playlist will include the play count for each of your top songs, so you can see exactly how many times you played your favorite tracks.

Other standout features this year include an interactive Top Song Quiz, a Listening Age feature, and Wrapped Clubs, which match you to one of six unique listening styles.

The company believes these additions will not only bring back the personalized, engaging experience that users have long expected from Wrapped, but will take it a step further by making it more interactive than before.

In the Top Song Quiz, for instance, you can try to guess which top song soundtracked your year before seeing the results.

The new interactive Wrapped Party feature isn’t just about comparing the personal streaming data you’ve already received to your friends’ data, as that’s something people already do on social media. Instead, the feature presents unique data stories for your group, like who’s the “most obsessed fan,” the “early bird,” the most “picky listener,” or even something as niche as the “dinner table explainer,” meaning the person who listens to the most news podcasts.

Spotify says these awards update dynamically every time you join a Wrapped Party, so no two sessions are ever the same — even if you run through them again with the same group of friends.

The new Wrapped Clubs, meanwhile, will group you into one of half a dozen listening styles, like the “Soft Hearts Club,” the “Club Serotonin,” the “Full Charge Crew,” the “Cosmic Stereo Club,” and others. You’ll also receive a role in the club based on your listening data. You might be a club leader if your listening choices strongly match the club’s values, a scout if you’re always seeking out new releases, or an archivist if you listen to music from past eras.

Another feature, Listening Age, compares your 2025 music listening to others in your age group. To calculate your age, the feature considers the release years of the tracks you listen to most. From there, it identifies the five-year span of music that you engaged with more than other listeners your age.

As in prior years, you’ll see your top songs, top artists, top genres, and, for the first time, top albums. If you engaged with audiobooks and podcasts, you’ll see metrics for those as well. Artists, writers, and podcasters will have their own version of Wrapped as before. And top fans will again receive video messages from their favorite artists, podcasters, and, now, authors.

You’ll also receive a playlist of your top songs of the year, as before.

What you won’t find in this year’s Wrapped is any feature that advertises it was made with AI.

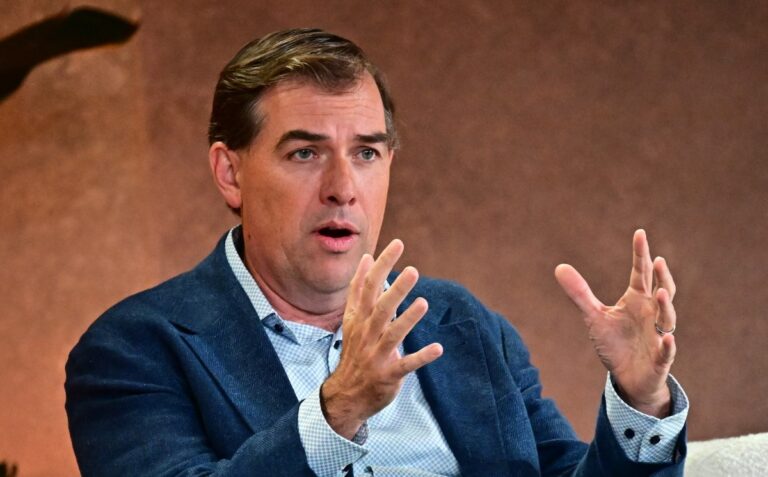

In a press briefing on Tuesday, Spotify’s Senior Director of Global Marketing, Matt Luhks, admitted the company received a “lot of feedback” about its 2024 AI-focused Wrapped experience, saying it was a “mix of positive and ‘more constructive feedback,’” despite the feature driving more engagement than prior years.

“We take all of that in. We use that as information, insights, [and] inspiration for how we approached Wrapped this year,” he said in a press event ahead of today’s launch.

“What our users tell us about Wrapped means a lot to us, so it was really informative in how we approached Wrapped this year. And what we tried to build was the most creative, most innovative, most engaging Wrapped ever,” he added, setting a high bar for the 2025 edition of the now 11-year-old annual year-in-review feature.

“We’re the original and, we believe, still the best,” Luhks said.

Still, AI was a part of the Wrapped experience. Though the company claims the overall experience was not made with AI, it does use an LLM (large language model) to add a storytelling layer to Wrapped’s facts and figures, and to generate natural language summaries of your data in other parts of the experience.

Spotify’s attempt to fix Wrapped after a notable stumble comes as the streamer faces increased competition from Apple, Amazon, YouTube, and others, which have all launched their own annual review features, inspired by Wrapped.

“Everyone seems to have their own version of Wrapped. Now, there’s a lot of reviews and replays and rewinds out there, but we believe that Wrapped still sets the bar for these year-end recaps,” Luhks said.

Along with the consumer experience, Spotify shared its top artists, songs, albums, podcasts, and audiobooks for the year, with top winners that included, respectively, Bad Bunny (top song and album), Joe Rogan (“The Joe Rogan Experience” podcast), and Rebecca Yarros (author of “Fourth Wing”).